Performance Troubleshooting

Troubleshoot Seq server performance and responsiveness

If Seq query performance is lagging, this page will help you track down the issue or collect the information our Support Team will need to help you out.

Understanding Seq Performance

Seq has an in-memory event store architecture geared towards rapid and flexible querying of recent log data, with older data available through slower "archive" queries.

This optimizes for the expected usage profile of diagnostic investigations, which are usually driven by ad-hoc queries on recent events.

Seq will use as much available RAM as possible for its internal cache. High RAM usage is not normally indicative of an issue. Seq will return memory to the system if overall system memory use increases.

Seq performs best when the ingested data and retention policies are set so that most queries are served from RAM. The goal of Seq performance tuning is initially to maximize the data available through the fast, in-memory cache.

Investigating Performance Problems

Cache Warm-up

After a server or service restart, especially on machines with limited I/O, it can take a while for the event cache to be fully populated. On an adequate machine, performance will pick up in under ten minutes. Seq will show a yellow "warning" triangle in the navigation bar until this process completes.

If cache warm-up is a bottleneck, retaining less data or moving the machine to faster (SSD) storage is generally recommended.

Ingestion Rate

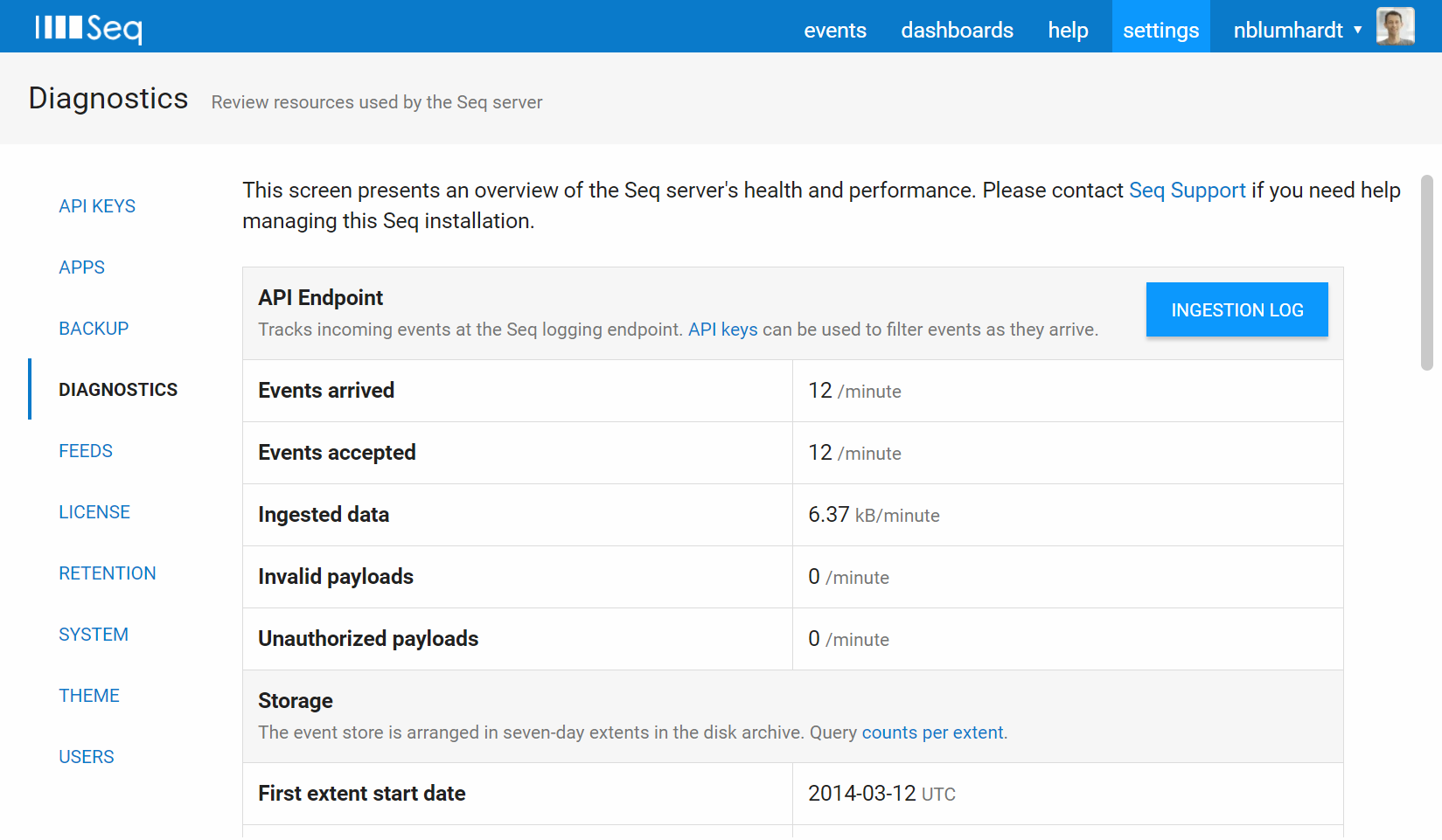

Seq exposes various metrics through the Settings > Diagnostics page in Seq itself.

This page includes information about the Seq process and the machine it is running on.

The various ingestion-related counters show the number of events arriving at Seq. If this number is unexpectedly high, you might have a runaway process logging more data than intended.

The best way to control ingestion in Seq is through API keys; they can not only provide fine-grained information about where events are coming from, but can also be used to apply levels and filters independently to the log sources sending data to Seq.
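As a rough illustration of the reasoning above, the following Python sketch tallies a sample of events by source to spot a runaway logger, then shows the effect a minimum level would have on volume. It is not a Seq API: the `source` field, the function names, and the 50% threshold are all assumptions made for the example. In Seq itself, the per-source breakdown and the minimum level would come from API keys.

```python
from collections import Counter

# Level names in ascending order of severity (assumed to match the
# levels used by the logging sources in this example).
LEVELS = ["Verbose", "Debug", "Information", "Warning", "Error", "Fatal"]


def find_noisy_sources(events, max_share=0.5):
    """Return the sources that account for more than max_share of all events."""
    counts = Counter(e["source"] for e in events)
    total = sum(counts.values())
    if total == 0:
        return []
    return sorted(s for s, n in counts.items() if n / total > max_share)


def meets_minimum_level(event, minimum):
    """True if the event is at or above the given minimum level."""
    return LEVELS.index(event["level"]) >= LEVELS.index(minimum)


# Hypothetical sample: one application is logging far more than the others.
sample = (
    [{"source": "checkout", "level": "Debug"}] * 80
    + [{"source": "billing", "level": "Information"}] * 15
    + [{"source": "auth", "level": "Warning"}] * 5
)

noisy = find_noisy_sources(sample)
kept = [e for e in sample if meets_minimum_level(e, "Information")]
print(noisy, len(kept))
```

In this sample, a minimum level of Information on the noisy source would remove most of the incoming volume before it is stored.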

System Requirements

Check the System Requirements documentation for some rule-of-thumb pointers to appropriate hardware sizing.

We highly recommend provisioning SSD storage for production Seq servers expected to handle any significant load.

Log data is messy, complex, and voluminous. Giving your log server a little extra CPU, RAM and I/O capacity can help it dig through events more effectively.

Retention Policies

The ideal retention policy configuration removes bulky data while it still fits within the Seq RAM cache.

So, if you have no retention policies active, and the RAM cache covers 30 days (see Settings > Diagnostics), aim to remove bulky data before the 30-day mark.

A typical retention scheme may look something like:

- On ingestion, remove debug-level events by setting a level on the appropriate API key (or configuring the source application not to log debug-level events)

- At 7 days, remove tracing information, for example, logged HTTP requests/paths/status codes

- At 30 days, remove all information that's not used in long-term tracking (i.e. doesn't contribute to charts/reports that will be viewed over a long time period)
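The age-based steps of a scheme like this can be modelled as a list of rules, each pairing a minimum age with a test for the events it removes. The Python sketch below is only a way to reason about such a scheme; it does not reflect how Seq implements retention, and the `kind` and `long_term` fields are hypothetical stand-ins for whatever filter a real retention policy would use.

```python
from datetime import datetime, timedelta, timezone


def is_http_trace(event):
    # Hypothetical marker for request tracing events.
    return event.get("kind") == "http_request"


def is_not_long_term(event):
    # Hypothetical flag for events that feed long-term charts and reports.
    return not event.get("long_term", False)


# (minimum age, predicate selecting events to delete once they reach that age)
RETENTION_RULES = [
    (timedelta(days=7), is_http_trace),
    (timedelta(days=30), is_not_long_term),
]


def should_delete(event, now):
    """True if any rule applies to an event of this age and shape."""
    age = now - event["timestamp"]
    return any(age >= min_age and matches(event)
               for min_age, matches in RETENTION_RULES)


now = datetime(2024, 1, 31, tzinfo=timezone.utc)
old_trace = {"timestamp": now - timedelta(days=8), "kind": "http_request"}
new_trace = {"timestamp": now - timedelta(days=3), "kind": "http_request"}
old_metric = {"timestamp": now - timedelta(days=40), "kind": "metric", "long_term": True}
old_other = {"timestamp": now - timedelta(days=31), "kind": "other"}

for e in (old_trace, new_trace, old_metric, old_other):
    print(e["kind"], should_delete(e, now))
```

Writing the rules out this way makes it easier to check that the bulkiest categories are removed while they are still within the window covered by the RAM cache.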

The more complex your environment, the more opportunities to use fine-grained retention policies to improve performance.

Event Structure

The size and shape of events have a substantial impact on how efficiently Seq can work with them.

- Seq is designed for regular log events; don't serialize large objects into log events that will be recorded in production

- Prefer attaching properties rather than concatenating contextual information into messages
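To illustrate the second point, the Python sketch below builds the same event twice: once with the context concatenated into the message text, and once with a message template and separate properties. The `make_event` helper is hypothetical, and the `@t`, `@mt`, and `@l` field names assume the compact JSON event format; check the ingestion documentation for the format your Seq version accepts.

```python
import json
from datetime import datetime, timezone


def make_event(template, level="Information", timestamp=None, **properties):
    """Build a JSON event with a message template and separate properties.

    Field names (@t, @mt, @l) are assumed from the compact JSON event format.
    """
    ts = timestamp or datetime.now(timezone.utc)
    event = {"@t": ts.isoformat(), "@mt": template, "@l": level}
    event.update(properties)
    return json.dumps(event)


order_id, country = 1234, "NZ"

# Harder to query: the context only exists inside the message text.
concatenated = make_event(f"Order {order_id} shipped to {country}")

# Easier to query: the template stays constant and the values are properties.
structured = make_event(
    "Order {OrderId} shipped to {Country}",
    OrderId=order_id,
    Country=country,
)

print(concatenated)
print(structured)
```

With the structured form, every event of this type shares one template, and values such as `OrderId` can be filtered on directly instead of being searched for as text.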

See this article for some more tips on writing structured events effectively.

Getting Help

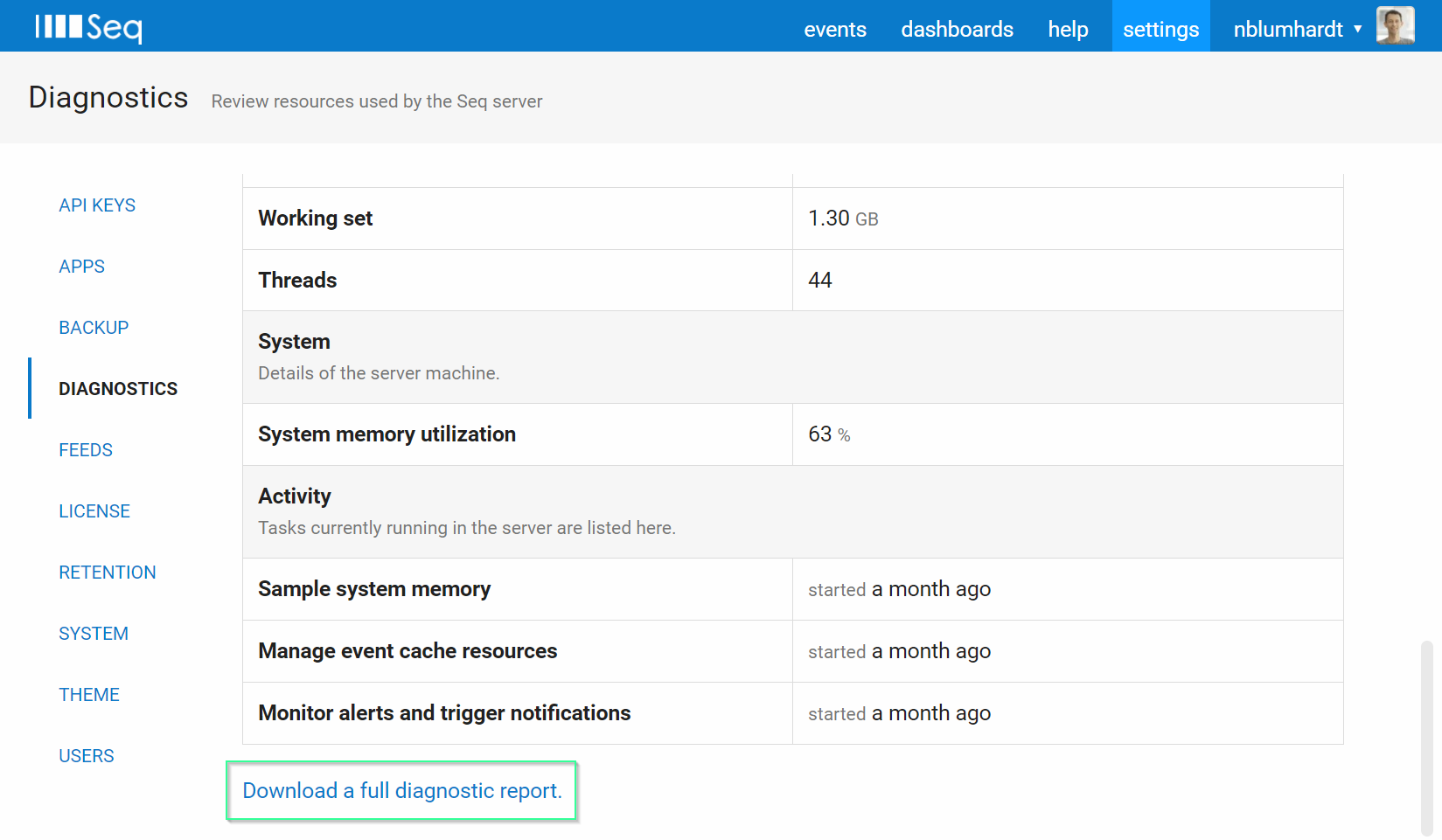

If you need to troubleshoot a performance issue with our Support Team, including the information from the Settings > Diagnostics page will help us help you faster.

You can download this information as a report, including recent internal logs from the Seq server, using the link at the bottom of the diagnostics page.

An email contact is listed on our support page.
